bahmanm

Husband, father, kabab lover, history buff, chess fan and software engineer. Believes creating software must resemble art: intuitive creation and joyful discovery.

Views are my own.

- 54 Posts

- 127 Comments

Good question!

IMO a good way to help a FOSS maintainer is to actually use the software (esp pre-release) and report bugs instead of working around them. Besides helping the project quality, I’d find it very heart-warming to receive feedback from users; it means people out there are actually not only using the software but care enough for it to take their time, report bugs and test patches.

“Announcement”

It used to be quite common on mailing lists to categorise/tag threads by using subject prefixes such as “ANN”, “HELP”, “BUG” and “RESOLVED”.

It’s just an old habit but I feel my messages/posts lack some clarity if I don’t do it 😅

1·1 year ago

I usually capture all my development-time “automation” in Make and Ansible files. I also use makefiles to provide a consistent set of commands for the CI/CD pipelines to work w/ in case different projects use different build tools. That way CI/CD only needs to know about `make build`, `make test`, `make package`, … instead of Gradle/Maven/… specific commands.

Most of the time, the makefiles are quite simple and don’t need many comments. However, there are times that’s not the case and hence the need to write a line of comment on particular targets and variables.

1·1 year ago

> Can you provide what you mean by check the environment, and why you’d need to do that before anything else?

One recent example is a makefile (in a subproject), w/ a dozen targets to provision machines and run Ansible playbooks. Almost all the targets need at least a few variables to be set. Additionally, I needed any fresh invocation to clean the “build” directory before starting the work.

At first, I tried capturing those variables w/ a bunch of `ifeq`s, `shell`s and `define`s. However, I wasn’t satisfied w/ the results for a couple of reasons:

- Subjectively speaking, it didn’t turn out as nice and easy-to-read as I wanted it to.
- I had to replicate my (admittedly simple) `clean` target as a shell command at the top of the file.

Then I tried capturing that in a target using `bmakelib.error-if-blank` and `bmakelib.default-if-blank` as below.

```makefile
##############

.PHONY : ensure-variables

ensure-variables : bmakelib.error-if-blank( VAR1 VAR2 )
ensure-variables : bmakelib.default-if-blank( VAR3,foo )

##############

.PHONY : ansible.run-playbook1

ansible.run-playbook1 : ensure-variables cleanup-residue | $(ansible.venv)
ansible.run-playbook1 : ...

##############

.PHONY : ansible.run-playbook2

ansible.run-playbook2 : ensure-variables cleanup-residue | $(ansible.venv)
ansible.run-playbook2 : ...

##############
```

But this was not DRY as I had to repeat myself.

That’s why I thought there may be a better way of doing this which led me to the manual and then the method I describe in the post.

> running specific targets or rules unconditionally can lead to trouble later as your Makefile grows up

That is true! My concern is that when the number of targets which don’t need that initialisation grows I may have to rethink my approach.

I’ll keep this thread posted of how this pans out as the makefile scales.

> Even though I’ve been writing GNU Makefiles for decades, I still am learning new stuff constantly, so if someone has better, different ways, I’m certainly up for studying them.

Love the attitude! I’m in the same boat. I could have just kept doing what I already knew but I thought a bit of manual reading is going to be well worth it.

1·1 year ago

That’s a great starting point - and a good read anyways!

Thanks 🙏

1·1 year ago

Agree w/ you re trust.

1·1 year ago

Thanks. At least I’ve got a few clues to look for when auditing such code.

3·1 year ago

Update

sh.itjust.works is now added to lemmy-meter 🥳 Thanks all.

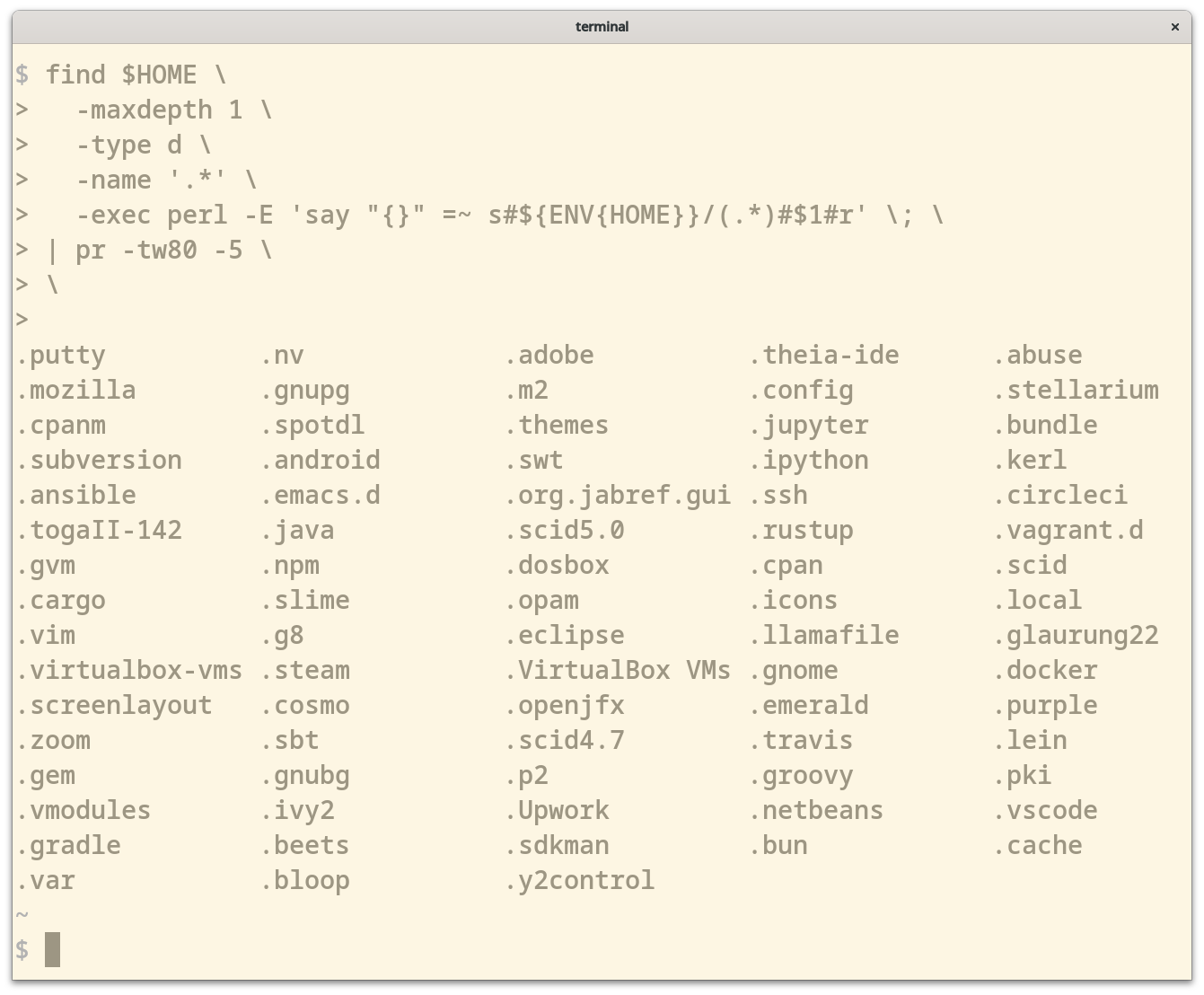

I didn’t like the capitalised names so configured xdg to use all lowercase letters. That’s why `~/opt` fits in pretty nicely.

You’ve got a point re `~/.local/opt` but I personally like the idea of having the important bits right in my home dir. Here’s my layout (which I’m quite used to now after all these years):

```
$ ls ~
bin  desktop  doc  downloads  mnt  music  opt  pictures  public  src  templates  tmp  videos  workspace
```

where

- `bin` is just a bunch of symlinks to frequently used apps from `opt`
- `src` is where I keep clones of repos (but I don’t do work in `src`)
- `workspace` is where I do my work on git worktrees (based off `src`)
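For anyone wanting to try a similar layout, here is a minimal sketch of how `bin`, `opt`, `src` and `workspace` could relate to each other. It builds everything under a throwaway directory rather than a real home dir, and `sometool` and `myrepo` are hypothetical placeholder names, not anything from the layout above.

```shell
#!/bin/sh
# Sketch only: "$root" stands in for $HOME so nothing real is touched.
root="$(mktemp -d)"

# Create the directories that matter for this sketch.
mkdir -p "$root/bin" "$root/opt/sometool/bin" "$root/src" "$root/workspace"

# Pretend an app was unpacked under opt/ (hypothetical "sometool").
printf '#!/bin/sh\necho "hello from sometool"\n' > "$root/opt/sometool/bin/sometool"
chmod +x "$root/opt/sometool/bin/sometool"

# bin/ only holds symlinks pointing into opt/.
ln -s "$root/opt/sometool/bin/sometool" "$root/bin/sometool"
ls -l "$root/bin"

# With a real clone in ~/src/myrepo (hypothetical), a worktree for the
# actual work would be created under ~/workspace along these lines:
#   git -C ~/src/myrepo worktree add -b feature ~/workspace/myrepo-feature
```

Putting `~/bin` on `PATH` then exposes only the apps that were deliberately linked, while `opt` can hold as many unpacked tools as needed.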

Thanks! So much for my reading skills/attention span 😂

Which Debian version is it based on?

133·1 year ago

Something that I’ll definitely keep an eye on. Thanks for sharing!

RE Go: Others have already mentioned the right way, though I’d personally prefer `~/opt/go` over what was suggested.

RE Perl: To instruct Perl to install to another directory, for example to `~/opt/perl5`, put the following lines somewhere in your bash init files.

```shell
export PERL5LIB="$HOME/opt/perl5/lib/perl5${PERL5LIB:+:${PERL5LIB}}"
export PERL_LOCAL_LIB_ROOT="$HOME/opt/perl5${PERL_LOCAL_LIB_ROOT:+:${PERL_LOCAL_LIB_ROOT}}"
export PERL_MB_OPT="--install_base \"$HOME/opt/perl5\""
export PERL_MM_OPT="INSTALL_BASE=$HOME/opt/perl5"
export PATH="$HOME/opt/perl5/bin${PATH:+:${PATH}}"
```

Though you need to re-install the Perl packages you had previously installed.
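In case the `${VAR:+:${VAR}}` bits in those exports look cryptic: they append a colon plus the old value only when the variable is already set and non-empty, so you never end up with a stray trailing colon. Here is a small sketch to illustrate; `prepend_path` is a hypothetical helper written for this example and the paths are made up.

```shell
#!/bin/sh
# prepend_path DIR CURRENT
# Prints DIR placed in front of CURRENT, using the ${var:+...} expansion:
# the ":CURRENT" part is emitted only if CURRENT is non-empty.
prepend_path() {
    dir=$1
    current=$2
    printf '%s\n' "${dir}${current:+:${current}}"
}

# Empty current value: no trailing colon is added.
prepend_path "/home/me/opt/perl5/bin" ""
# prints /home/me/opt/perl5/bin

# Non-empty current value: joined with a single colon.
prepend_path "/home/me/opt/perl5/bin" "/usr/bin"
# prints /home/me/opt/perl5/bin:/usr/bin
```

This matters for variables like `PERL5LIB` which are often unset to begin with; an empty entry in such a list can be interpreted in ways you did not intend.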

4·1 year ago

This is fantastic! 👏

I use Perl one-liners for record and text processing a lot and this will definitely be something I keep coming back to - I’ve already learned a trick from “Context Matching” (9) 🙂

1·1 year ago

That sounds like a great starting point!

🗣Thinking out loud here…

Say, if a crate implements the `AutomatedContentFlagger` interface it would show up on the admin page as an “Automated Filter” and the admin could dis/enable it on demand. That way we can have more filters than CSAM using the same interface.

2·1 year ago

I couldn’t agree more 😂

Except that, what the author uses is pretty much standard in the Go ecosystem, which is, yes, a shame.

To my knowledge, the only framework which does it quite seamlessly is Spring Boot which, w/ sane and well thought out defaults, gets the tracing done w/o the programmer writing a single line of code to do tracing-related tasks.

That said, even Spring’s solution is pretty heavy-weight compared to what comes OOTB w/ BEAM.

1·1 year ago

Update 1

Thanks all for your feedback 🙏 I think everybody made a valid point that the OOTB configuration of 33 requests/min was quite useless and we can do better than that.

I reconfigured timeouts and probes and tuned it down to 4 HTTP GET requests/minute out of the box - see the configuration for details.

🌐 A pre-release version is available at lemmy-meter.info.

For the moment, it only probes the test instances

I’d very much appreciate your further thoughts and feedback.

2·1 year ago

Agreed. It was a mix of too ambitious standards for up-to-date data and poor configuration on my side.

11·1 year ago

> sane defaults and a timeout period

I agree. This makes more sense.

> Your name will be associated with abuse forevermore.

I was going to ignore your reply as a 🧌 given it’s an opt-in service for HTTP monitoring. But then you had a good point on the next line!

Let’s use such important labels where they actually make sense 🙂

Thanks for the pointer! Very interesting. I actually may end up doing a prototype and see how far I can get.