- cross-posted to:

- tech@kbin.social

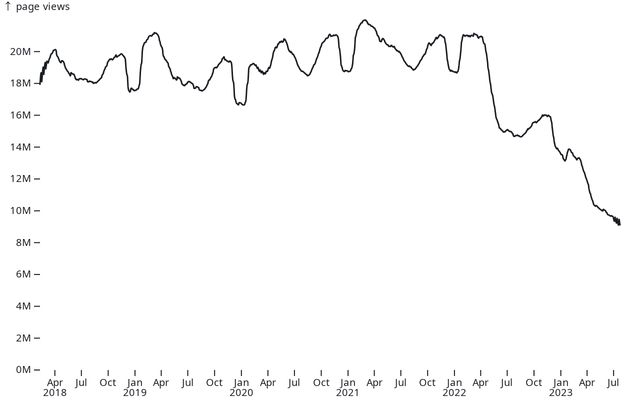

Over the past one and a half years, Stack Overflow has lost around 50% of its traffic. This decline is similarly reflected in site usage, with approximately a 50% decrease in the number of questions and answers, as well as the number of votes these posts receive.

The charts below show the usage represented by a moving average of 49 days.
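For readers unfamiliar with the smoothing used in the charts, here is a minimal Python sketch of a moving average. The function name and the sample numbers are made up for illustration, and since the post does not say whether the window is trailing or centered, a trailing window is assumed.

```python
from collections import deque


def trailing_moving_average(values, window=49):
    """Return the trailing moving average of `values`.

    Assumption: a trailing (not centered) window. Points that do not
    yet have a full window behind them are reported as None.
    """
    if window <= 0:
        raise ValueError("window must be positive")
    buf = deque()
    running_sum = 0.0
    out = []
    for v in values:
        buf.append(v)
        running_sum += v
        if len(buf) > window:
            # Drop the oldest value once the window is full.
            running_sum -= buf.popleft()
        out.append(running_sum / window if len(buf) == window else None)
    return out


# Hypothetical daily question counts (made-up numbers, not real data).
daily = [100, 120, 80, 110, 90, 130, 70]
smoothed = trailing_moving_average(daily, window=7)
```

A 49-day window spans exactly seven weeks, so every average contains each weekday equally often, which evens out the weekday/weekend cycle in the raw counts.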

What happened?

There is a lot of Stack Overflow hate in this thread. I never had a bad experience. I was always on there yelling at noobs, telling them to Google it, and linking to irrelevant questions. It was just wholesome fun that briefly dulled my crippling insecurities.

So you never had a bad experience, just were actively causing bad experiences for others?

I think you just fell for quite an obvious case of sarcasm.

A “woosh” if you will.

Sorry for being autistic ig

It isn’t obvious unless it has the slash s!

We should leave the /s back on reddit

Sadly, it really is necessary if one wants to be sure nobody actually takes the sarcasm seriously. It’s hard for people to tell in a textual medium.

Heck, my style of humor in RL is often sarcasm or deliberately ludicrous comments and people still sometimes go “wait, really?” Even though they know me well.

I’m going to go without it from now on. I can handle clarifying myself if it’s absolutely necessary for someone.

Yeah but those people who take the sarcasm seriously are fools and you can’t make things foolproof.

Encouraging and putting up with hair-splitting lawyerly un-generous readings of comments is what leads to people just straight up interpreting any “Plus I’m being genuine here” messages as lies.

We need to trust our readers, else we end up in an echo chamber culture where any deviation from the Party line is interpreted as “disruptive person who must be banned to protect our community”.

These things are linked.

The ability to deliver and detect sarcasm without training wheels is a layer of communication we need and can’t afford to abandon, in order to maintain a productive conversational environment.

> Yeah but those people who take the sarcasm seriously are fools and you can’t make things foolproof.

Or, you know, they have a legitimately very hard time distinguishing it for actual reasons.

chinesescholarshadasimilarstanceagainstallkindofpunctuationclaimingtheabilitytodeliveranddetectmeaningwithouttrainingwheelswasalayerofcommunicationpeopleneededandcouldnotaffordtoabandoninordertomaintainaproductiveconversationalenvironmentwithanyoneunabletoreflectuponanddiscerntheintendedmeaningbeingafoolnotworthyoftheloftymessageswrittencommunicationwasintendedfortodiscern https://en.m.wikipedia.org/wiki/Chinese_punctuation

(This is a lesson in history, so I’ll let the discerning reader decide for themselves whether there is sarcasm contained in it)

Sarcasm

No “/s”, no sarcasm.

I’m pretty sure they were being sarcastic.

Rather than cultivate a friendly and open community, they decided to be hostile and closed. I am not surprised by this at all, but I am surprised with how long the decline has taken. I have a number of bad/silly experiences on stackoverflow that have never been replicated on any other platform.

Like what?

deleted by creator

Honestly, I have a question that I answered myself and that was up for over 10 years with hundreds of views and votes, only for it to be marked as a duplicate of a question that has nothing to do with the one I asked. Specifically, I was working with canvas and SVG, and the linked question involved neither. The other question is also 5 years newer, so even if it were the same it would be a duplicate of mine, not the other way around.

Another one is a very high rated answer I gave was edited by a big contributor to add a participle several years after I wrote it and then marked as belonging to them now

Both times I issued a dispute, only for it to be completely ignored. Eventually I used a scrubber bot to delete every contribution I ever made instead of letting random power mods just steal content on my high profile posts.

Can you give more context on the second one? Everyone can edit posts and it shows both the original poster as well as the most recent editor on the post. (I’m not defending SE. I dislike them too.)

All questions have been asked and all answers have been given

and copilot and chatgpt give good enough answers without being unfriendly

ChatGPT has no knowledge of the answers it gives. It is simply a text completion algorithm. It is fundamentally the same as the thing above your phone keyboard that suggests words as you type, just with much more training data.

Who cares? It still gives me the answers I am looking for.

Yeah it gives you the answers you ask it to give you. It doesn’t matter if they are true or not, only if they look like the thing you’re looking for.

An incorrect answer can still be valuable. It can give some hint of where to look next.

@magic_lobster_party I can’t believe someone wrote that. Incorrect answers do more harm than good. If the person asking doesn’t know, how should he or she know the answer is incorrect and look for a hint?

I don’t know about others’ experiences, but I’ve been completely stuck on problems I only figured out how to solve with chatGPT. It’s very forgiving when I don’t know the name of something I’m trying to do or don’t know how to phrase it well, so even if the actual answer is wrong it gives me somewhere to start and clues me in to the terminology to use.

In the context of coding it can be valuable. I produced two tables in a database and asked it to write a query and it did 90% of the job. It was using an incorrect column for a join. If you are doing it for coding you should notice very quickly what is wrong at least if you have experience.

Google the provided solution for additional sources. Often when I search for solutions to problems I don’t get the right answer directly. Often the provided solution may not even work for me.

But I might find other clues of the problem which can aid me in further research. In the end I finally have all the clues I need to find the answer to my question.

In my experience, with both coding and natural sciences, a slightly incorrect answer that you attempt to apply, realize is wrong in some way during initial testing/analysis, then you tweak until it’s correct, is very useful, especially compared to not receiving any answer or being ridiculed by internet randos.

Well, if they’re referring to coding solutions, they’re right: sometimes non-working code can lead to a working solution, if you know what you’re doing ofc.

How is that practically different from a user perspective than answers on SO? Either way, I still have to try the suggested solutions to see if they work in my particular situation.

At least with those, you can be reasonably confident that a single person at some point believed in their answer as a coherent solution

That doesn’t exactly inspire confidence.

What point are you trying to make? LLMs are incredibly useful tools

Yeah for generating prose, not for solving technical problems.

You’ve never actually used them properly then.

> not for solving technical problems

One example is writing complex regex. A simple well written prompt can get you 90% the way there. It’s a huge time saver.

> for generating prose

It’s great at writing boilerplate code so I can spend more of my time architecting solutions instead of typing.

The good thing if it gives you the answer in a programming language is that it’s quite simple to test if the output is what you expect. Also, a lot of humans give wrong answers…

There was a story once that said if you put an infinite number of monkeys in front of an infinite number of typewriters, they would eventually produce the works of William Shakespeare.

So far, the Internet has not shown that to be true. Example: Twitter.

Now we have an artificial monkey remixing all of that, at our request, and we’re trying to find something resembling Hamlet’s Soliloquy in what it tells us. What it gives you is meaningless unless you interpret it in a way that works for you – how do you know the answer is correct if you don’t test it? In other words, you have to ensure the answers it gives are what you are looking for.

In that scenario, it’s just a big expensive rubber duck you are using to debug your work.

There’s a bunch of people telling you “ChatGPT helps me when I have coding problems.” And you’re responding “No it doesn’t.”

Your analogy is eloquent and easy to grasp and also wrong.

Fair point, and thank you. Let me clarify a bit.

It wasn’t my intention to say ChatGPT isn’t helpful. I’ve heard stories of people using it to great effect, but I’ve also heard stories of people who had it return the same non-solutions they had already found and dismissed. Just like any tool, actually…

I was just pointing out that it is functionally similar to scanning SO, tech docs, Slashdot, Reddit, and other sources looking for an answer to our question. ChatGPT doesn’t have a magical source of knowledge that we collectively also do not have – it just has speed and a lot of processing power. We all still have to verify the answers it gives, just like we would anything from SO.

My last sentence was rushed, not 100% accurate, and shows some of my prejudices about ChatGPT. I think ChatGPT works best when it is treated like a rubber duck – give it your problem, ask it for input, but then use that as a prompt to spur your own learning and further discovery. Don’t use it to replace your own thinking and learning.

Even if ChatGPT is giving exactly the same quality of answer as you can get out of Stack Overflow, it gives it to you much more quickly and pieces together multiple answers into a script you can copy and work with immediately. And it’s polite when doing so, and will amend and refine its answers immediately for you if you engage it in some back-and-forth dialogue. That makes it better than Stack Overflow and not functionally similar.

I’ve done plenty of rubber duck programming before, and it’s nothing like working with ChatGPT. The rubber duck never writes code for me. It never gives me new information that I didn’t already know. Even though sometimes the information ChatGPT gives me is wrong, that’s still far better than just mutely staring back at me like a rubber duck does. A rubber duck teaches me nothing.

“Verifying” the answer given by ChatGPT can be as simple as just going ahead and running it. I can’t think of anything simpler than that, you’re going to have to run the code eventually anyway. Even if I was the world’s greatest expert on something, if I wrote some code to do a thing I would then run it to see if it worked rather than just pushing it to master and expecting everything to be fine.
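As a small illustration of "just running it", here is a Python sketch. The `slugify` helper is hypothetical and merely stands in for whatever snippet was pasted from a chatbot or from SO; the point is that a handful of checks written down beforehand show quickly whether the suggestion does what was asked.

```python
import re


def slugify(title: str) -> str:
    """Hypothetical helper standing in for a pasted suggestion."""
    # Collapse every run of non-alphanumeric characters into one dash.
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower())
    return slug.strip("-")


def check_slugify() -> None:
    # A few expectations written down before trusting the suggestion.
    assert slugify("Hello, World!") == "hello-world"
    assert slugify("  spaces  everywhere ") == "spaces-everywhere"
    assert slugify("already-a-slug") == "already-a-slug"
    assert slugify("???") == ""


check_slugify()
```

If one of the checks fails, the failing case is a concrete thing to paste back into the conversation or to search for.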

This doesn’t “replace your own thinking and learning” any more than copying and pasting a bit of code out of Stack Overflow does. Indeed, it’s much easier to learn from ChatGPT because you can ask it “what does that line with the angle brackets do?” or “Could you add some comments to the loop explaining all the steps” or whatever and it’ll immediately comply.

I honestly believe people are way overvaluing the responses ChatGPT gives.

For a lot of boilerplating scenarios or trying to resolve some pretty standard stuff, it’s good.

I had an issue a while back with QueryDSL running towards an MSSQL instance, which I tried resolving by asking ChatGPT some pretty straightforward questions regarding the tool. Without going too much into detail, I basically got stuck in a loop where ChatGPT kept suggesting solutions that were not viable at all in QueryDSL. I pointed it out, trying to point out why what it did was wrong and it tried correcting itself suggesting the same broken solutions.

The AI is great until whatever it has been taught previously doesn’t cover your situation. My solution was a bit of digging on Google away, which helped me resolve the issue. But had I been stuck with only ChatGPT, I’d still be going around in loops.

It really doesn’t work as a replacement for google/docs/forums. It’s another tool in your belt, though, once you get a good feel for its limitations and use cases; I think of it more like upgraded rubber duck debugging. Bad for getting specific information and fixes, but great for getting new perspectives and/or directions to research further.

I agree! It has been a great help in those cases.

I just don’t believe that it can fulfill the actual need for sites like StackOverflow. It probably never will be able to either, unless we manage to make it learn new stuff without reliable sources like SO, while also allowing it to snap up these obscure answers to problems without burying them in tons of broken solutions.

ChatGPT is great for simple questions that have been asked and answered a million times previously. I don’t see any downside to these types of questions not being posted to SO…

Exactly this. SO is now just a repository of answers that ChatGPT and its ilk can train against. A high percentage of the questions that SO users need answers to are already asked and answered. New and novel problems arise so infrequently thanks to the way modern tech companies are structured that an AI that can read and train on the existing answers and update itself periodically is all most people need anymore… (I realize that was rambling, I hope it made sense)

So soon they will start responding with “this has been asked before, let’s change the subject”

🤣 🤣

Exactly! It will all come full circle

yes! this! is chatgpt intelligent: no! does it more often than not give good enough answers to daily but somewhat obscure and specific programming questions: yes! is a person on SO intelligent: maybe. do they give good enough answers to daily but somewhat obscure and specific programming questions: mostly

It’s not great for complex stuff, but it works for quick questions if you are stuck. The answers are given quicker, without snark, and usually work.

A repository of often (or at least not seldom) outdated answers.

@focus Is that the reason why we get more and more AI written articles?

No, that’s because of capitalism

Are they all linked in a “duplicate of” circle yet?

Amazing how much hate SO receives here. As a knowledge base it works really well. And yes, a lot of questions have been answered already. And also yes, just like in any other online community, there are bad apples which you unfortunately have to live with.

Idolizing ChatGPT as a viable replacement is laughable, because it has no knowledge, no understanding, of what it says. It’s just repeating what it “learned” and connected. Ask about something new and it will simply lie, which is arguably worse than an unfriendly answer in my opinion.

The advice on Stack Overflow is trash because of “that question has been answered already”. Yeah, it was answered 10 years ago on a completely different version. That answer is deprecated.

Not to mention the amount of convoluted answers that get voted to the top and then someone with two upvotes at the bottom meekly giving the answer that you actually needed.

It’s like that librarian from the New York public library who determined whether or not children’s books would even get published.

She gave “good night moon” a bad score and it fell out of popularity for 30 years after the author died.

I don’t think that’s entirely fair. Typically answers are getting upvoted when they work for someone. So the top answer worked for more people than the other answers. Now there can be more than one solution to a problem but neither the people who try to answer the question, nor the people who vote on the answers, can possibly know which of them works specifically for you.

ChatGPT will just as well give you a technically correct, but for you wrong, answer. And only after some refinement give the answer you need. Not that different than reading all the answers and picking the one which works for you.

Of course older answers are going to have more upvotes if they technically work. That doesn’t mean it’s the best answer. It’s possible that someone would like to post a new, better answer and is unable to because of SO restrictions on posting.

The kinds of people who post on SO regularly aren’t going to be the people with the best answers.

On top of that, SO gives badges for upvoting and possibly other benefits I’m unaware of.

As we saw with Reddit, upvote systems can be inherently flawed; we have no way of knowing if an upvote is genuine.

Explains the huge swaths of bad advice shared on Reddit though. It’s shared confidently and with a smile. Positive vibes only!

What’s “Reddit”?

(I removed all my advice from there when it was considered “violent content” and “sexualization of minors”… go find your 3d printing, programming, system management and chemistry tips elsewhere, I did it anyway)

I hear you. I firmly believe that comparing the behavior of GPT with that of certain individuals on SO is like comparing apples to oranges though.

GPT is a machine, and unlike human users on SO, it doesn’t harbor any intent to be exclusive or dismissive. The beauty of GPT lies in its willingness to learn and engage in constructive conversations. If it provides incorrect information, it is always open to being questioned and will readily explain its reasoning, allowing users to learn from the exchange.

In stark contrast, some users on SO seem to have a condescending attitude towards learners and are quick to shut them down, making it a challenging environment for those seeking genuine help. I’m sure that these individuals don’t represent the entire SO community, but I have yet to have a positive encounter there.

While GPT will make errors, it does so unintentionally, and the motivation behind its responses is to be helpful, rather than asserting superiority. Its non-judgmental approach creates a more welcoming and productive atmosphere for those seeking knowledge.

The difference between GPT and certain SO users lies in their intent and behavior. GPT strives to be inclusive and helpful, always ready to educate and engage in a constructive manner. In contrast, some users on SO can be dismissive and unsupportive, creating an unfavorable environment for learners. Addressing this distinction is vital to fostering a more positive and nurturing learning experience for everyone involved.

In my opinion this is what makes SO ineffective and is largely why its traffic had dropped even before ChatGPT became publicly available.

Edit: I did use GPT to remove vitriol from and shorten my post. I’m trying to be nicer.

I think I see a core issue highlighted in your comment that seems like a common theme in this comment section.

At least from where I’m sitting, SO is not and has never been a place for learning, as in a substitute for novices learning by reading a book or documentation. In my 12 years of experience with it, I’ve always seen it as a place for professionals and semi-professionals of varying experience and overlap sharing answers typically not found in the manual, which speeds up the pace of investigations and work by filling each other’s gaps. Not a place where people with plenty of time on their hands and/or a knack for teaching go to teach novices. Of course there are those people there too, but that’s been a rare occurrence in my experience. And so if a person expects to get a nice lesson instead of a terse answer from someone with 5 minutes or less, those expectations will be perpetually broken. For me that terse answer is enough more often than not, and its accuracy is infinitely more important than the attitude used to say it.

I expect a terse answer. I also am a professional. My experience with SO users is that they do not behave professionally. There’s not much more to it.

I don’t want to compare the behavior, only the quality of the answers. An unintentional error of ChatGPT is still an error, even when it’s delivered with a smile. I absolutely agree that the behavior of some SO users is detrimental and pushes people away.

I can also see ChatGPT (or whatever) as a solution to that - both as moderator and as source of solutions. If it knows the solution it can answer immediately (plus reference where it got it from), if it doesn’t know the solution it could moderate the human answers (plus learn from them).

That’s fair. You don’t have to compare the behavior. There’s plenty of that in the thread already.

I think the issue is how people got to Stack Overflow. People generally ask Google first, which hopefully would take you somewhere where somebody has already asked your question and it has answers.

Type a technical question into Google. Back in the day it would likely take you to Experts Exchange. Couple of years later it would take you to Stack Overflow. Now it takes you to some AI generated bullshit that scraped something that might have contained an answer, but was probably just more AI generated bullshit.

Either their SEO game is weak, they stopped paying Google as much for result placement, or they’ve just been overwhelmed with limitless nonsense made by bots for the sole purpose of selling advertising space that other bots will look at.

Or maybe I’m wrong and everybody is just asking ChatGPT their technical questions now, in which case god fucking help us all…

It gives decent answers and is still relatively near the top. However, if you need to ask something that isn’t there, you’re either going to be intimidated or your question is going to be left unanswered for months.

I’m more inclined to ask questions on sites like Reddit, because it’s something I’m familiar with and there’s a far better chance of getting an answer within a couple of hours.

ChatGPT is also far superior because there’s a feedback loop almost in real time. Doesn’t matter if it gives the wrong answer, it gives you something to work with and try, and you can keep asking for more ideas. That’s much preferable than having to wait for months or even years to get an answer

Ya, I’m not sure what the deal with the hate is. ChatGPT gives you an excellent starting point, and if you give it good feedback and direction you can actually churn out some pretty decent code with it.

Understandably, it has become an increasingly hostile or apathetic environment over the years. If one checks questions from 10 years ago or so, one generally sees people eager to help one another.

Now they often expect you to have searched through possibly thousands of questions before you ask one, and immediately accuse you if you missed some – which is unfair, because a non-expert can often miss the connection between two questions phrased slightly differently.

On top of that, some of those questions and their answers are years old, so one wonders if their answers still apply. Often they don’t. But again it feels like you’re expected to know whether they still apply, as if you were an expert.

Of course it isn’t all like that, there are still kind and helpful people there. It’s just a statistical trend.

Possibly the site should implement an archival policy, where questions and answers are deleted or archived after a couple of years or so.

human nature remembers negative experiences much better than positive, so it only takes like 5% assholes before it feels like everyone is toxic.

True that! and a change from 2% to 5% may feel much larger than that.

The worst is when you have actually read all those questions and clearly stated how they don’t apply and that you already tried them, and a mod still closes your question as a duplicate.

I can’t wait to read gems like “Answered 12/21/2005 you moron. Learn to search the website. No, I won’t link it for you, this is not a Q&A website”.

Answers from 2005 that may not be remotely relevant anymore, especially if a language has seen major updates in the TWENTY YEARS since!

More important for frameworks than languages, IMO. Frameworks change drastically in the span of 5-10 years.

🤣

No, they shouldn’t be archived. I say that because technology can change. At some point they added a new sort method which favors more recent upvotes and it helps more recent answers show above old ones with more votes. This can happen on very old posts where everyone else might not use the site anymore. We shouldn’t expect the original asker to switch the accepted answer potentially years down the line.

There’s plenty of things wrong with SE and their community but I don’t think this is one that needs to change.

Honestly.

Stackoverflow is a horrible place to ask anything.

I have had 100% legit, well-documented questions closed as duplicates of unrelated other questions.

It’s… honestly, just not a friendly place to go. Full of a bunch of assholes…

Most of the answers actually suck too. Many times, you will find the correct answer downvoted, and incorrect or bad answers upvoted.

I found this when I was in college too. I only ever asked a few questions and they were all closed as duplicates, and I never figured out how the answers from those threads solved anything close to what I was asking. Lol

A few months ago I had a 7 year old question of mine closed as a duplicate of a 5 year old question. Just another sign that StackOverflow mods are hard at work.

I don’t even know how to answer questions.

I lost my old account and now I don’t have points on new account and I can not do anything. I can not vote, I can not comment, I can not answer questions. So I just dropped it. I can not even thank (by liking or upvoting) a person whose answer helped me.

I believe others have similar experience.

I feel you. At this point, it’s a circle-jerk of who can close tickets with the most non-helpful, ridiculous responses…

Google search going to absolute shit is what happened

I also attribute most of this to Google. I was used to googling a coding question and getting 10 SO results I could quickly scan through. For about a year now I only get blog posts about the general behaviour of the thing I was googling.

This is the most likely explanation. It doesn’t make sense to have such a dramatic dropoff in user behavior without an obvious trigger.

I don’t understand. Google search has its issues for sure, but it always shows stack overflow highly when I search programming things.

SO is such a miserable and toxic place that oftentimes I’d rather read more documentation or reach out to someone elsewhere like Discord. And I would never post a question there or comment there.

I’d rather read the docs than just about anything. I love good documentation. I wanna know how and why things work.

The problem is that basically nobody has good docs. They are almost all either incomplete or unreadable.

A lot of companies won’t employ technical writers, who exist to make good, thorough, complete and well-presented documentation… they rather assume their engineers can just write the docs.

And no, no they can’t… very few engineers study the principles of effective communication. They may understand things, but they can’t explain them.

That’s fair. At my company we have technical writers for the external docs and internal docs are usually written by whoever has worked on something and got frustrated that nobody in the company could give them a high level overview, and they had to go through the code for a couple hours.

Tbf though, I’ll take docs that aren’t written super well that tell me how things from our internal libraries should be used. Or just comments. I’ll take comments telling me WHY we are doing something.

I don’t expect our internal docs to be MSDN docs. But I like to read an overview of at least the workflow before I jump into updating a large project.

While I agree, writing good docs is hard for a very intangible benefit. Honestly, it feels like doing the same work twice, with the prospect of doing it again and again in the future as the software is updated. It’s a little demoralizing.

It is hard, I agree. I’m not very good at it myself. But even semi-decent docs are better than googling around or stepping through a decompiled package.

And it’s super useful to new developers, and would have saved me a lot of time and frustration when I was new.

It’s hostile to new users, and when you do ask you will likely not get an answer, might get scolded, or just get closed as a duplicate. Then there is the fact that most questions already have answers, no matter whether they are outdated or just bad advice. Pretty much everything is on GitHub now. Usually, if I have a genuine question, I just raise it there and get an answer from the developers themselves. Or I just go to their website’s API/library docs, which have gotten good lately. Then finally, a recent addition: with ChatGPT you can ask just about any stupid question you have, and it may give you some idea of how to fix the problem you encountered. Pretty much the ultimate rubber duck buddy.

There’s an open source equivalent at https://codidact.com/

Thank you! Never heard of it, it looks very interesting!

ChatGPT doesn’t chastise me like a drill instructor whenever I ask it about coding problems.

It’s funny because if you look at the numbers it looks like traffic started to go down before chat GPT was actually released to the public, indicating that maybe people thought that the site was too much of a pain in the ass to deal with before that and GPT is just the nail in the coffin.

Personally, of all my attempts at positive interactions on that site, only one has worked out, and at this point I treat it as a read-only site because it’s not worth my time arguing with pedants just to get a question answered.

If I went to the library and all the librarians were assholes I probably wouldn’t go to that library anymore either.

It just invents the answer out of thin air, or worse, it gives you subtle errors you won’t notice until you’re 20 hours into debugging

so, like SO?

I agree with you that it sometimes gives wrong answers. But most of the time, it can help better than StackOverflow, especially with simple problems. I mean, there wouldn’t be such an exodus from StackOverflow if ChatGPT answers were so bad, right?

But, for very specific subjects or bizarre situations, it obviously cannot replace SO.

And you won’t know if the answers it gave you are OK or not until too late, seems like the Russian Roulette of tech support, it’s very helpful until it isn’t

Depending on Eliza MK50 for tech support doesn’t stop feeling absurd to me

Sounds the same as believing a random stranger.

How many SO topics have you seen with only one, universally agreed upon solution?

How do you know the answer that gets copied from SO will not have any downsides later? Chatgpt is just a tool. I can hit myself in the face with a wrench as well, if I use it in a dumb way. IMHO the people that get bitten in the ass by chatgpt answers are the same that just copied SO code without even trying to understand what it is really doing…

Just like real humans.

It’s too much to attribute to any one effect. 50% is a lot for a website of this size (don’t forget that Lemmy exploded from a migration of <5% Reddit usershare). Let’s KISS by attributing likely causes in order of magnitude:

- ChatGPT became the world’s fastest growing website in a single month and it’s actually half-decent at being a code tutor

- ChatGPT bots got unleashed on SO and diluted a lot of SO’s comparative advantages

- Stack Overflow moderators went on strike, which further damaged content quality

- Structurally speaking, SO is an environment which tends to become more elitist over time. As the userbase becomes progressively more self-selective, the population shrinks.

- The SO format requires a stream of novel questions, but novel questions generally get rarer over time

- Developer documentation has generally improved over time. On SO, asking about a well-documented thing is a short-circuit pathway to getting RTFM’d & discussion locked

ChatGPT came out after the beginning of the trend in the charts. That falsifies the first 2 points of the hypothesis. The strike happened a month ago, so that’s gone too. 4, 5 and 6 do not appear to be abrupt processes even if we assume they’re true, so they likely don’t explain it. There must be something else that happened that could cause such a large and abrupt change before any of the above. I bet on a change in the major source of traffic - Google.

You’ve assumed that I want to explain the root cause of the initial decline. This is not the case. Historically, SO has seen several periods of decline. What I’m actually addressing is the question of why the decline has not stopped, because the sustained nature of this decline is what makes it unusual. If you look at the various charts, you can see a brief rally which gets cut off in late Winter 2022 – this lines up rather nicely with the timing of ChatGPT’s release, I feel.

Let’s ignore that. Tell me more about your Google angle: what’s the basis of your hypothesis?

I’m not who you were speaking to, but back when I used to read it occasionally, the Stack Overflow blog repeatedly mentioned that the vast majority of its traffic comes from Google. If the vast majority of your traffic comes from Google and then your traffic quantity changes dramatically, it’s reasonable to look to the source of your traffic.

Thank you for doing my work for me. It’s just Occam’s razor.

But GitHub Copilot came out right around that time…

In my experience many of the answers have become out of date. It’s gradually becoming an archive of the old ways of doing things for many languages / frameworks.

Questions are often closed as a duplicate when the linked question doesn’t apply anymore. It’s full of really bad ways of doing things.

I’m not really sure of the solution at this point.

Also ChatGPT.

It’s a last resort for me nowadays.

Yeah, this is what they get and deserve. They rose by providing meaningful, helpful, and technically adept answers to questions. Then they encouraged an abusive moderator culture that marks questions as duplicate, linking to unrelated questions. They also still do not offer easy ways for the knowledge base to be updated as things change over time. Now the company is abusing their abusive moderators, causing them to basically go on strike right now.

Here’s hoping the next thing doesn’t suck as much ass as Stack Exchange ultimately has.

https://en.m.wikipedia.org/wiki/Fediverse

Based on that, there is no “q&a” type of Fediverse software (a clear answer and a clear “voted best” answer).

Stack Overflow had a huge number of “mod tools” to help curate the content (gold nuggets) given. They did not do the step of aggregating content (gold ingots) like Wikipedia has. The marking as duplicate could and should be tempered by “due diligence” or “age of the last time this was asked”, but how it is implemented is up to them.

To be fair™ they did at least do a little bit to deal with the existing answers becoming obsolete by changing the default answer sorting. The “new” (it’s already been at least a year IIRC) sorting pushes down older answers and allows newer answers to rise to the top with fewer votes. That still doesn’t fix the issue that the accepted answer likely won’t change as new ways of doing things become standard, but at least it’s a step in the right direction.
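As a rough illustration of how a sort like that could let newer answers overtake older ones with fewer votes, here is a minimal sketch. It is not Stack Overflow’s actual formula: the `Answer` class, the `vote_ages_days` field, and the one-year half-life are all assumptions made up for this example. Each upvote simply counts for less the older it is.

```python
from dataclasses import dataclass


@dataclass
class Answer:
    label: str
    # Age of each upvote in days (hypothetical input, not a real SO field).
    vote_ages_days: list[float]


def decayed_score(answer: Answer, half_life_days: float = 365.0) -> float:
    """Sum the upvotes, halving each vote's weight every `half_life_days`."""
    return sum(0.5 ** (age / half_life_days) for age in answer.vote_ages_days)


def sort_answers(answers: list[Answer], half_life_days: float = 365.0) -> list[Answer]:
    """Return answers ordered by decayed score, highest first."""
    return sorted(
        answers,
        key=lambda a: decayed_score(a, half_life_days),
        reverse=True,
    )


# Illustrative data only: ten votes from ten years ago vs. two votes from today.
old = Answer("old", [3650.0] * 10)
new = Answer("new", [0.0, 0.0])
ranking = [a.label for a in sort_answers([old, new])]
print(ranking)
```

With these made-up numbers the two fresh votes outweigh the ten decade-old ones, which is the behaviour described above. The accepted-answer checkmark is a separate mechanism and would not be affected by a score like this.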

One thing I’ve always wondered about Stack Overflow is why is there only one accepted answer ever possible even though this is programming and there are many different ways of doing any given thing?

Ironic, since one of ChatGPT’s biggest weaknesses is that it’s an archive of the old ways of doing things. You can’t filter by time on ChatGPT, and ChatGPT isn’t being retrained on the latest knowledge live. These aren’t inherent to GPT, so it’s possible that a future iteration will overcome these issues.

On ChatGPT, if a solution doesn’t work, you can ask in real time for a different one. On SO, your post just gets locked for being a duplicate.

Asking in real-time wouldn’t help in this scenario (e.g. some mirror is no longer accessible). If anything, it’d just lead you further astray and waste more time, because GPT’s knowledgebase doesn’t have this knowledge.

Why is everyone saying this is because Stack Overflow is toxic? Clearly the decline in traffic is because of ChatGPT. I can say from personal experience that I’ve been visiting Stack Overflow way less lately because ChatGPT is a better tool for answering my software development questions.

I was going to say ChatGPT.

I think the smugness of StackOverflow is still part of it. Even if ChatGPT sometimes fabricates imaginary code, its tone is flowery and helpful, compared to the typical pretentiousness of StackOverflow users.

In my experience, ChatGPT is very good at interpreting documentation. So even if it hasn’t been asked on stack overflow, if it’s in the documentation that ChatGPT has indexed (or can crawl with an extension) you’ll get a pretty solid answer. I’ve been asking it a lot of AWS questions because it’s 100x better than deciphering the ancient texts that amazon publishes. Although sometimes the AWS docs are just wrong anyway.

Also, you can have it talk like a catgirl maid, so I find that’s particularly helpful as well.

The timing doesn’t really add up though. ChatGPT was published in November 2022. According to the graphs on the website linked, the traffic, the number of posts and the number of votes were all already in visible decline and at their lowest value in more than 2 years. And this isn’t even considering that ChatGPT took a while to get picked up into the average developer’s daily workflow.

Anyhow though, I agree that the rise of ChatGPT most likely amplified StackOverflow’s decline.

Half the time when I ask it for advice, ChatGPT recommends nonexistent APIs and offers examples in some Frankenstein code that uses a bit of this system and a bit of that, none of which will work. But I still find its hit rate to be no worse than Stack Overflow, and it doesn’t try to humiliate you for daring to ask.

It depends on what sort of thing you’re asking about. More obscure languages and systems will result in hallucinated APIs more often. If it’s something like “how do I sort this list of whatever in some specific way in C#” or “can you write me a regex for such and such a task” then it’s far more often right. And even when ChatGPT gets something wrong, if you tell it the error you encountered from the code it’ll usually be good at correcting itself.

I find that if it gets it wrong in the first place, its corrections are often equally wrong. I guess this indicates that I’ve strayed into an area where its training data is not of good quality.

Yeah, if it’s in a state where it’s making up imaginary APIs out of whole cloth then in my experience you’re asking it for help with something it just doesn’t know enough about. I get the best results when I’m asking about popular stuff (such as “write me a Python script to convert wav files to mp3” - it’ll know the right APIs for that sort of task, generally speaking). If I’m working on something that’s more obscure then sometimes it’s better to ask ChatGPT for generalized versions of the actual question. For example, I was tinkering with a mod for Minetest a while back that was meant to import .obj models and convert them into a voxelized representation of the object in-game. ChatGPT doesn’t know Minetest’s API very well, so I was mostly asking it for Lua code to convert the .obj into a simple array of voxel coordinates and then doing the API stuff to make it Minetest-specific myself. The vector math was the part that ChatGPT knew best so it did an okay job on its part of the task.
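For anyone curious what the “generalized version” of that task looks like, here is a minimal sketch in Python rather than Lua. It is not the code from the mod: the function names and the `voxel_size` parameter are made up for illustration, and it only snaps the model’s vertices to a grid. Filling in the faces between vertices, and anything Minetest-specific, is left out.

```python
import math
from typing import Iterable


def parse_obj_vertices(lines: Iterable[str]) -> list[tuple[float, float, float]]:
    """Collect x, y, z from the 'v' lines of a Wavefront .obj file."""
    vertices = []
    for line in lines:
        parts = line.split()
        # Geometric vertices start with "v"; "vt", "vn", "f" and comments are skipped.
        if len(parts) >= 4 and parts[0] == "v":
            vertices.append((float(parts[1]), float(parts[2]), float(parts[3])))
    return vertices


def voxelize(
    vertices: Iterable[tuple[float, float, float]],
    voxel_size: float = 1.0,
) -> list[tuple[int, ...]]:
    """Snap each vertex to an integer grid cell and return the unique cells, sorted."""
    cells = {
        tuple(math.floor(coord / voxel_size) for coord in vertex)
        for vertex in vertices
    }
    return sorted(cells)


# Tiny inline model so the example runs without a file on disk.
obj_lines = [
    "# comment",
    "v 0.2 0.7 1.5",
    "v 0.9 0.1 1.1",
    "vn 0 1 0",
    "v -0.5 2.0 3.0",
    "f 1 2 3",
]
voxels = voxelize(parse_obj_vertices(obj_lines))
print(voxels)
```

The first two vertices land in the same cell, so three vertices become two voxels. Turning that list into placed nodes is the API-specific part you would still write by hand.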

Your follow up question should be for ChatGPT to write those APIs for you.

Over the last five years, I’d click a link to Stack Overflow while googling, but I’ve never made an account because of the toxicity.

But yeah, ChatGPT is definitely the nail in the coffin. Being able to give it my code and ask it to point out where the annoying bug is… is amazing.